🚀 Diving into the MLOps Tech Stack 🚀

Atul Yadav

March 30, 2024

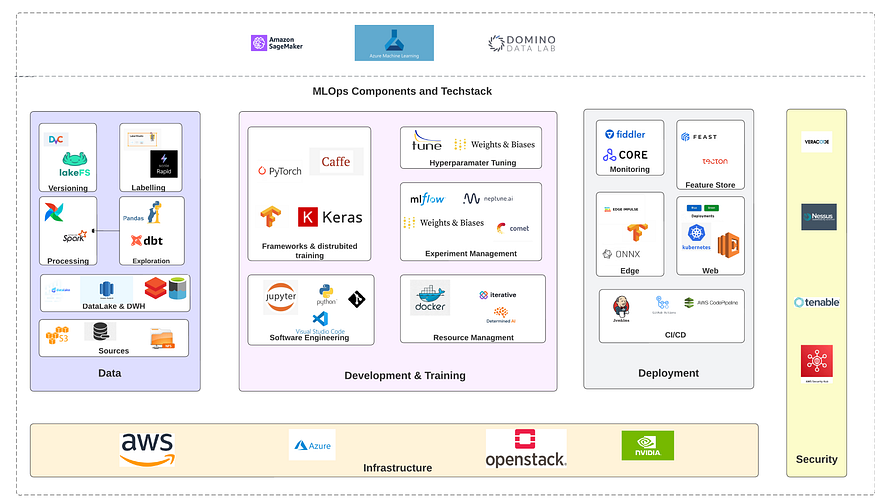

🌐 Introduction to MLOps:

MLOps is the integration of machine learning with DevOps best practices. It streamlines the ML lifecycle, from model development and training through deployment and monitoring. By automating these stages, teams can deploy ML models more efficiently.

🧰 Key Components of the MLOps Stack:

1. Model Development & Training:

- Amazon SageMaker: A comprehensive AWS service that simplifies building, training, and deploying ML models.

- Frameworks: Tools like Caffe, PyTorch, and Keras offer extensive libraries for developing ML models.

- Jupyter: A widely used notebook environment for Python, well suited to interactive ML development.

- Hyperparameter Tuning: Use tools like tune to optimize your model's performance.
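
To make hyperparameter tuning concrete, here is a minimal random-search sketch in plain Python. It does not use tune or any other library: the `objective` function is a hypothetical stand-in for "train a model and return a validation score", and the search ranges are illustrative assumptions.

```python
import random


# Hypothetical objective standing in for "train a model, return a validation score".
# It peaks (score 0) at learning_rate=0.1, depth=6; a real one would train and evaluate.
def objective(learning_rate: float, depth: int) -> float:
    return -((learning_rate - 0.1) ** 2) * 100 - (depth - 6) ** 2


def random_search(n_trials: int, seed: int = 0) -> tuple[dict, float]:
    """Sample n_trials random configurations and keep the best-scoring one."""
    rng = random.Random(seed)  # seeded so a search can be reproduced
    best_config, best_score = None, float("-inf")
    for _ in range(n_trials):
        config = {
            "learning_rate": 10 ** rng.uniform(-3, 0),  # log-uniform in [0.001, 1]
            "depth": rng.randint(2, 10),                # inclusive integer range
        }
        score = objective(**config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


if __name__ == "__main__":
    config, score = random_search(n_trials=200)
    print(config, round(score, 3))
```

Dedicated tuning tools typically layer smarter search strategies, early stopping of weak trials, and parallel execution on top of this basic loop.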

2. Collaboration & Versioning:

- Domino DA & Iterative: MLOps platforms that support collaboration and automate ML processes.

- lakeFS: Offers version control for data lakes, ensuring changes are traceable.

- Labelling: Annotating data is essential, since labelled data is what supervised ML models learn from.
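
Versioning tools such as lakeFS make data changes traceable. The core idea can be sketched with content hashing: the hypothetical helper `dataset_version` below derives a version ID from every file's relative path and contents, so any change to the data produces a new ID. This is a simplified illustration, not how lakeFS works internally (lakeFS provides Git-like branches and commits over object storage).

```python
import hashlib
import sys
from pathlib import Path


def dataset_version(root: str) -> str:
    """Hypothetical helper: a SHA-256 version ID over all files under root.

    Files are visited in sorted order so the ID does not depend on filesystem
    listing order. Each file contributes its relative path plus a fixed-size
    digest of its contents, which keeps the encoding unambiguous.
    """
    base = Path(root)
    digest = hashlib.sha256()
    for path in sorted(p for p in base.rglob("*") if p.is_file()):
        digest.update(path.relative_to(base).as_posix().encode("utf-8"))
        digest.update(b"\0")  # separator: paths never contain NUL bytes
        digest.update(hashlib.sha256(path.read_bytes()).digest())
    return digest.hexdigest()


if __name__ == "__main__":
    print(dataset_version(sys.argv[1] if len(sys.argv) > 1 else "."))
```

Recording this ID next to each trained model is one lightweight way to tell which data snapshot a model was trained on.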

3. Data Management:

- Pandas: A popular Python library for efficient data manipulation and analysis.

- dbt: Helps build consistent, maintainable data transformation pipelines.

- Data lake & data warehouse (DWH): Robust storage solutions for handling large volumes of data.

- FEAST: A feature store to streamline feature management in ML projects.
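
One job a feature store like FEAST performs is the point-in-time join: when building training data, each labelled event should only see feature values recorded at or before the event's timestamp, otherwise future information leaks into training. The sketch below shows that idea in plain Python; `point_in_time_join` and its tuple-based inputs are hypothetical and are not Feast's API.

```python
from bisect import bisect_right
from collections import defaultdict


def point_in_time_join(events, feature_rows):
    """Attach to each (entity_id, event_ts) event the latest feature value whose
    timestamp is <= event_ts, so training rows never see future values.

    events:       list of (entity_id, event_ts)
    feature_rows: list of (entity_id, feature_ts, value)
    Returns a list of (entity_id, event_ts, value), where value is None if no
    feature row existed yet for that entity at event time.
    """
    # Group feature rows by entity and sort each group by timestamp.
    grouped = defaultdict(list)
    for entity_id, ts, value in feature_rows:
        grouped[entity_id].append((ts, value))
    timestamps, values = {}, {}
    for entity_id, rows in grouped.items():
        rows.sort(key=lambda r: r[0])
        timestamps[entity_id] = [ts for ts, _ in rows]
        values[entity_id] = [v for _, v in rows]

    joined = []
    for entity_id, event_ts in events:
        ts_list = timestamps.get(entity_id, [])
        # Count of feature rows at or before the event; the last of them is used.
        i = bisect_right(ts_list, event_ts)
        joined.append((entity_id, event_ts, values[entity_id][i - 1] if i > 0 else None))
    return joined


if __name__ == "__main__":
    features = [("u1", 10, 0.5), ("u1", 20, 0.7)]
    events = [("u1", 15), ("u1", 5)]
    print(point_in_time_join(events, features))  # [('u1', 15, 0.5), ('u1', 5, None)]
```

The event at time 5 gets `None` rather than the value recorded at time 10, which is exactly the leakage a point-in-time join is meant to prevent.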

4. Deployment & Monitoring:

- Docker: Packages applications and their dependencies into containers, ensuring consistent deployment across environments.

- Weights & Biases: A dual-purpose platform for experiment tracking and model management.

- CORE Monitoring: Monitors the performance of ML models in production.

- Deployment: Roll out ML models to targets such as the web and edge devices.
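
Monitoring production models often starts with checking whether incoming data has drifted away from the training data. A minimal sketch, assuming a single numeric feature, computes the Population Stability Index (PSI); the function name, bin count, and `eps` smoothing are illustrative choices, not the behavior of any specific monitoring product.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample (e.g. training data) and a production sample.

    PSI = sum over bins of (actual% - expected%) * ln(actual% / expected%).
    Bin edges come from the reference sample's min/max; production values
    outside that range are clipped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


if __name__ == "__main__":
    train = [i / 100 for i in range(1000)]
    prod = [x + 2 for x in train]  # simulated shift in production
    print(round(population_stability_index(train, prod), 3))
```

A commonly cited rule of thumb treats a PSI below 0.1 as little shift and above 0.25 as a significant shift worth investigating, though thresholds are usually tuned per feature.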

5. CI/CD & Infrastructure:

- CI/CD: Practices that automate stages like building, testing, and deploying applications.

- Infrastructure: AWS and Azure provide robust support for ML workloads, covering everything from compute to storage.
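
In an ML CI/CD pipeline, one useful stage is a quality gate that blocks deployment when a newly trained model underperforms the current one. The sketch below assumes metrics are stored as JSON files with an `accuracy` key; the file layout, the `passes_gate` helper, the `gate.py` script name, and the 0.01 tolerance are all hypothetical.

```python
import json
import sys


def passes_gate(candidate: dict, baseline: dict, metric: str = "accuracy",
                max_regression: float = 0.01) -> bool:
    """True if the candidate's metric is no more than max_regression below the baseline's."""
    return candidate[metric] >= baseline[metric] - max_regression


def main(argv: list[str]) -> int:
    # Hypothetical CLI: python gate.py candidate_metrics.json baseline_metrics.json
    with open(argv[1]) as f:
        candidate = json.load(f)
    with open(argv[2]) as f:
        baseline = json.load(f)
    ok = passes_gate(candidate, baseline)
    print("gate passed" if ok else "gate failed: candidate regressed")
    return 0 if ok else 1  # a non-zero exit code fails the CI job


if __name__ == "__main__":
    sys.exit(main(sys.argv))
```

A CI job could run this script right after training; because it exits non-zero on a regression, the pipeline stops before the deploy stage.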

6. Security & Optimization:

- Security: An integral facet of MLOps. Ensure all data, models, and pipelines are secure.

- Optimization Tools: Tools like ONNX and NVIDIA can significantly enhance model performance.
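
One common technique behind model optimization tools is quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below shows symmetric per-tensor INT8 quantization in plain Python as a simplified illustration; real toolchains such as ONNX Runtime's quantization utilities or NVIDIA TensorRT add calibration and run on optimized kernels.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to ints in [-127, 127] using one scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero tensors
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map the integers back to approximate float values."""
    return [v * scale for v in q]


if __name__ == "__main__":
    q, scale = quantize_int8([0.5, -1.27, 0.0, 1.0])
    print(q, scale, dequantize(q, scale))
```

Each weight is recovered to within half a quantization step (`scale / 2`), while storage drops to roughly a quarter of float32.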

🌟 Conclusion:

This overview covers a sample MLOps tech stack, centered primarily on AWS. However, the right tools and services for you will depend on your project's specific needs. The world of MLOps is vast, and its growth is only accelerating. Choose wisely, and build efficiently! 🌐🤖📈